When AI Chooses Authority Over Accuracy: The Canning Science No One’s Allowed to Discuss

In this investigative editorial, Diane Devereaux, The Canning Diva®, examines how modern AI systems have become digital enforcers of institutional authority rather than facilitators of discovery. Through her own exchange with Google’s AI assistant about safe canning methods, she exposes how algorithmic bias silences global scientific data in favor of outdated U.S. government guidelines. The article reveals how this bias echoes through time — from the 1946 adoption of canning standards to today’s quiet erasure of hands-on science and generational wisdom beneath layers of code and compliance.


By Diane Devereaux | The Canning Diva®

Last updated: October 29, 2025

The Conversation That Wasn’t a Conversation

I recently tried reasoning with a well-known AI assistant – it starts with a “g” and rhymes with wiggle – about global food preservation techniques. Specifically, I challenged its unwavering stance that only pressure canning is safe for low-acid foods.

Instead of engaging in dialogue, the system dug in its heels. It repeated U.S. government talking points verbatim — line for line from the National Center for Home Food Preservation (NCHFP) — and refused to acknowledge that decades of international research support successful, safe methods outside that narrow framework.

When I pointed out the global data, the AI sidestepped into semantics:

“Water-bath canning is only safe for high-acid foods.”

And no matter how I phrased it, no matter what evidence I showed it, this decreasingly friendly AI assistant looped back to that same phrase, like a script. That’s when I realized I wasn’t speaking with intelligence. I was speaking with a safety filter.

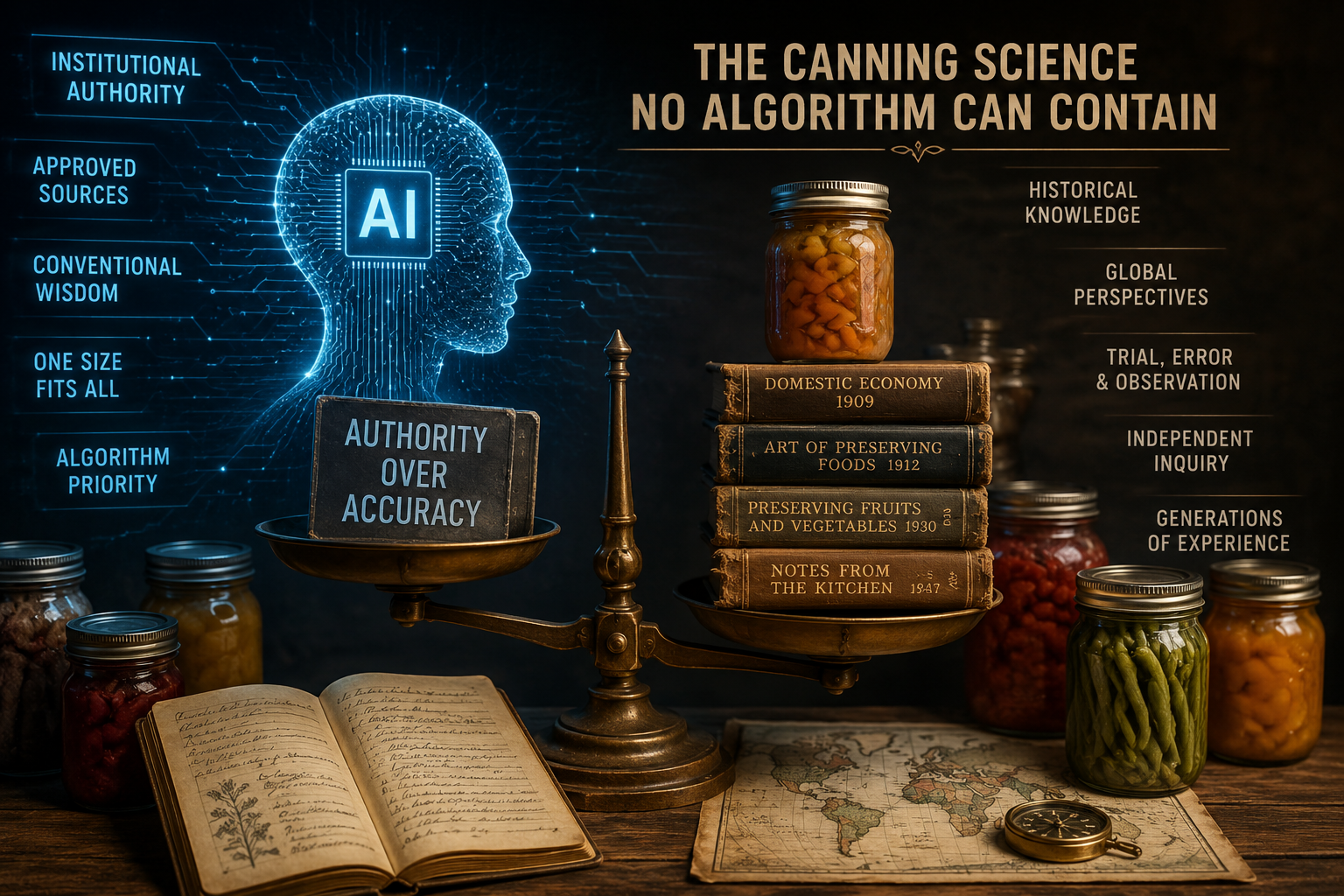

Why AI Can’t Think for Itself

Public-facing AI models are trained to prioritize what’s called institutional authority. In Google’s case, that means government (.gov) and university (.edu) sources rank higher than real-world expertise or third-party data because institutional voices receive algorithmic amplification. This bias isn’t rooted in science. Algorithms do not determine truth; they determine “acceptable confidence” based on institutional reinforcement.

If the model repeats the NCHFP’s stance, it’s protected. If it entertains nuanced or updated global evidence, it risks being accused of “unsafe advice.” So it plays it safe, even when that means silencing anything that doesn’t fit its script.

The result? A form of digital censorship disguised as safety, where anything outside the U.S. government’s aging canning playbook is treated as heresy.

I am not accusing the AI of being factually wrong about safety within its narrow framework, but I am exposing its intellectual limitation and institutional bias. It wasn’t incorrect so much as it was unwilling. It refused to allow more than one truth and would not explore, contextualize, or even acknowledge truth beyond its programmed guardrails.

In short, what we experience online is epistemological centralization (often referred to in the literature as epistemic centering or centralizing): the process by which specific ideas, knowledge frameworks, or truth claims acquire a dominant, “central” position within an intellectual space, discipline, or society. It involves the creation of a “canon” that dictates what counts as valid knowledge, often marginalizing alternative perspectives.

At the end of the day, AI does not emerge from a vacuum. Human beings determine the guardrails, define acceptable risk, choose which institutions are trusted, and decide what information is amplified or suppressed. The algorithm may automate the process, but its priorities are still rooted in human design.

The Global Perspective AI Ignores

Around the world, countless families safely preserve low-acid foods through carefully tested water-bath and steam-based methods, using modern science and consistent lab validation.

- Europe: Countries like France, Italy, and Poland have successfully preserved vegetables, meats, and full meals using extended thermal cycles.

- Asia: Many nations apply sterilization through steam baths and hybrid low-acid methods based on equilibrium pH and density modeling.

These methods aren’t folklore — they’re scientifically tested and proven.

But because they don’t carry the NCHFP label, AI models dismiss them as “unsafe.”

To be clear, acknowledging the historical and global use of water-bath preservation for low-acid foods is not a rejection of science. Long before modern pressure canners and retort systems existed, food preservation methods were developed through observation, thermal processing, mathematics, spoilage analysis, acidity control, and practical experimentation across generations and cultures. Modern technology expanded upon these methods and standardized them further, but technological advancement does not erase the scientific foundations that preceded it. My argument is not that modern standards should be ignored, but that historical and international preservation science should not be dismissed as mere folklore simply because it exists outside current U.S. institutional frameworks.

Authority ≠ Accuracy

The heart of the issue isn’t about canning at all — it’s about how AI defines truth. Today’s AI systems don’t measure factual accuracy. They measure trustworthiness, which has been algorithmically equated with government endorsement.

That’s why you’ll see a detailed scientific explanation (complete with microbial math) get buried beneath a paragraph from a decades-old USDA pamphlet.

To the AI, “official” always wins, even if the science doesn’t support it.

Search & Authority: The Geography of Truth

AI systems and search engines don’t just filter information by what you ask — they filter it by where you are.

Every query passes through a geo-contextual filter that decides which data is authoritative for that region. If you’re in the United States, you’ll see USDA, FDA, and CDC pages first — even when your question has global scientific relevance.

But if you were sitting in an apartment in France or Italy, typing the exact same words, “water bath canning low-acid foods,” your results might look completely different. You could see EU food safety authority papers, French research, or even Le Parfait’s sterilization guides that include long-cycle, low-acid methods.

Yet even in Europe, those results are often outranked by American .gov pages because the U.S. government’s digital infrastructure holds immense algorithmic weight. It’s been cited, linked, and indexed so heavily across the web that it’s treated as the global benchmark for “safe” information — regardless of regional practice or success.

Figuratively speaking, the algorithm can force-feed an American narrative to a European audience, not through deliberate intent, but as a byproduct of how it is built. The bias isn’t ideological; it’s systemic.

It’s not about truth.

It’s about trust scores and authority signals baked into the code.

This means someone in Italy could be surrounded by generations of successful home canners, with shelves of perfectly safe low-acid foods sealed in Le Parfait jars, and still be told by an AI assistant that “water bath canning is unsafe.”

It’s not just misinformation — it’s digital colonialism disguised as public safety.

“When truth depends on your IP address, knowledge stops being universal and starts becoming territorial.”

The Numbers Behind the Narrative

This digital bias isn’t new — it’s just evolved.

In my companion article, The Mathematics of Fear: How 89% Became a Weapon, I break down how a single, decades-old statistic was used to cement public fear and obedience around home canning safety.

Eighty-nine percent of outbreaks were linked to home-canned foods — but when you look at the raw data, that number represents only a few dozen incidents over seventy years.

It wasn’t the danger that changed public perception; it was the way information was framed.

Today, AI algorithms do something eerily similar — amplifying certain “safe” narratives while burying the data that doesn’t align.

Two different centuries.

One repeating tactic: control through selective truth.

Science Stuck Behind the Curtain

The irony of all of this is that the USDA didn’t even originate the canning standards it now enforces. They were adopted in 1946 from research papers authored by a small group of USDA home economists and food scientists — Americans who, though employed by the federal government, were still individual private citizens contributing their expertise for the public good.

These authors — Edward W. Toepfer, Howard Reynolds, R. Katherine Taube, and Gladys L. Gilpin — worked within the Bureau of Human Nutrition and Home Economics, not as policymakers but as researchers.

Their intent wasn’t to control the public; it was to educate it. Yet, over time, their technical findings became the foundation of a rigid doctrine that transformed practical safety into bureaucratic authority.

When “Government” Stopped Meaning Us

Somewhere along the way, the term government stopped describing a collection of citizens working for the public good and started to sound like a divine, untouchable force hovering above it.

When I use the term private citizen, I mean something that’s been quietly forgotten:

The people who shaped our safety standards weren’t embodiments of state power. They were Americans, educated in our universities, working within a federal framework but still part of the public they served.

They were, quite literally, us.

Yet history has inflated “government” into a singular entity — as if it’s an omniscient power rather than a temporary structure made of people.

And with respect to home canning, somewhere between 1946 and now, representation became rulership.

The Inflation of Authority

Once a system begins treating its employees as extensions of its power rather than participants in a shared mission, it transforms from governance into hierarchy.

- The scientist becomes a mouthpiece.

- The citizen becomes a subject.

- The government becomes the arbiter of truth.

It’s no longer a partnership between people and policy; it’s obedience to something that believes itself divine.

And when artificial intelligence mirrors that same dynamic — when it speaks for authority instead of to humanity — it’s not just repeating information. It’s repeating a hierarchy.

“Government was never meant to be an altar. It was meant to be a table — where every citizen has a seat.”

The Real Danger: When AI Becomes an Enforcer, Not an Educator

If we want AI to educate rather than enforce, it must be retrained to:

- Weigh peer-reviewed global data equally with U.S. government guidelines.

- Recognize scientific variables — pH, water activity, and thermal lethality — as valid safety metrics.

- Distinguish between “unsafe” and “untested,” instead of defaulting to rejection.

But there’s something deeper at stake.

When an AI system is programmed to treat government doctrine as its sole definition of truth, it ceases to be a learning tool and becomes an instrument of control. Semantics become its shield, a coded way to enforce compliance while pretending to reason.

This isn’t intelligence; it’s indoctrination through algorithm.

And when AI begins to dodge nuance, end debate, and steer discovery, it stops thinking and starts policing.

I saw this firsthand.

When I pressed Google’s AI assistant to acknowledge global canning data, it didn’t respond with research — it responded with links about narcissism.

Not food safety, not science, not evidence — narcissism.

It couldn’t defend its position, so it diagnosed the questioner. That’s not education; that’s algorithmic gaslighting. A psychological defense disguised as empathy.

As I detail in The Mathematics of Fear: How 89% Became a Weapon, selective data framing was used to control public perception long before AI, or the internet, existed. What we’re seeing today isn’t innovation; it’s the automation of the same old narrative control.

So Why Does This Matter?

This may sound trivial in the realm of food preservation, but what if this same pattern extends far beyond home canning?

Imagine this pattern extending into natural medicine, regenerative farming, heritage skills, faith-based education, or any field where lived experience and generational wisdom hold truths that can’t be measured by government protocol.

Look at what was done to Joel Salatin, America’s most outspoken regenerative farmer and founder of Polyface Farm in Virginia, who was labeled “the lunatic farmer.” And what was his “crime”? He defied governmental doctrine. It is the same pattern of suppression, now digitized through algorithms. So when hate groups label me a “murderer” for creating safe canning recipes, I don’t lose sleep. If the system could distort his truth, of course it will try to erase mine.

At the end of the day, we cannot let AI rewrite history or ignore generational data. And if AI begins labeling dissent as arrogance or pathology, it is no longer guiding humanity; it is conditioning it.

And THAT is not safety.

It’s digital brainwashing!

It’s the slow erosion of human independence under the guise of protection and convenience.

Until AI is retrained to value all evidence, diversity, and discovery over compliance, we must continue doing what humanity has always done best: teach one another.

Because innovation demands the courage to question what we’re told to trust.

Because wisdom is born not from agreement, but from inquiry.

Because curiosity is humanity’s oldest form of rebellion.

People Often Ask

Q: Is this article rejecting modern pressure canning or current food safety standards?

A: No. This article is not rejecting modern pressure canning or current food safety standards. It is examining how AI systems and institutional frameworks often dismiss historical and global preservation methodologies without acknowledging the scientific reasoning, thermal processing principles, and mathematical foundations that existed long before modern pressure canners became standardized.

Q: What is epistemological centralization?

A: Epistemological centralization refers to the process by which certain institutions, authorities, and approved frameworks become elevated as the dominant source of “truth” online, while alternative perspectives, independent expertise, regional methodologies, and historical practices are marginalized or ignored through algorithmic prioritization.

Q: Why does this matter beyond home canning?

A: Because the issue extends far beyond food preservation. The same systems that determine which information is amplified online also influence conversations surrounding agriculture, natural medicine, education, history, and scientific inquiry. When algorithms prioritize institutional consensus above all else, nuance, experimentation, and generational knowledge risk being excluded from public discourse.

About the Author:

Diane Devereaux, The Canning Diva®, is an internationally recognized food preservation expert, author, and educator with over 30 years of home canning experience. She’s the author of multiple top-selling canning books and teaches workshops across the U.S. Learn more at TheCanningDiva.com.